This page examines the reliability of microRNA (miRNA) expression data and its inherent limitations.

Techniques for measuring gene expression (mRNA) had reached a high standard of reliability by 2004 at the latest, and data produced by skilled experimenters can generally be trusted. Measuring microRNA (miRNA) expression, on the other hand, remains a significant challenge even today. It is crucial to understand that miRNA data is often not as consistent or reliable as mRNA data.

Here, we compare gene and miRNA expression data across multiple datasets for Hepatocellular Carcinoma (HCC).

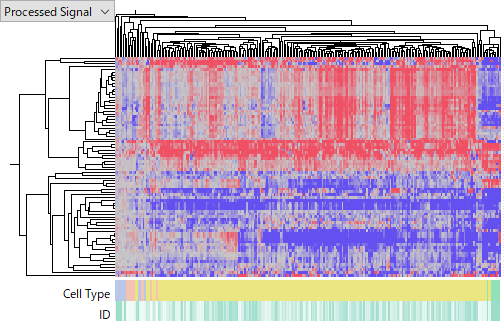

Example: Gene Expression Datasets (mRNA)

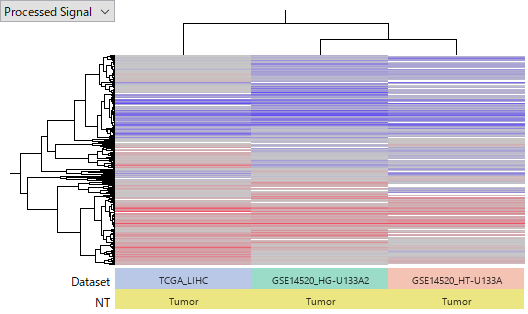

The heatmap below compares TCGA-LIHC with GSE14520. The TCGA data was generated via RNA-Seq, while GSE14520 used two types of Affymetrix GeneChips (HG-U133A 2.0 and HT_HG-U133A), so we treat it as two separate data sets, giving three in total. We reprocessed the raw data into Log2 Ratios (Tumor vs. Normal). Red indicates up-regulation in tumors, while blue indicates down-regulation.
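
As a rough illustration of what a Tumor vs. Normal log2 ratio means, the sketch below computes it in two ways: for paired samples (tumor and normal from the same patient) and for unpaired samples (each tumor against the average normal level). The function names, the pseudocount, and the toy values are assumptions made for this example; this is not the actual processing applied to the data sets shown here.

```python
import math
from statistics import mean


def paired_log2_ratios(tumor, normal, pseudocount=1.0):
    """log2(tumor / normal) for matched samples from the same patients."""
    if len(tumor) != len(normal):
        raise ValueError("a paired design needs equal numbers of tumor and normal samples")
    return [
        math.log2((t + pseudocount) / (n + pseudocount))
        for t, n in zip(tumor, normal)
    ]


def unpaired_log2_ratios(tumor, normal, pseudocount=1.0):
    """log2 level of each tumor sample minus the mean log2 level of the normal group."""
    baseline = mean(math.log2(n + pseudocount) for n in normal)
    return [math.log2(t + pseudocount) - baseline for t in tumor]


# Hypothetical expression values for a single gene (arbitrary linear units).
tumor_values = [799.0, 399.0, 1599.0]
normal_values = [199.0, 199.0, 199.0]

paired = paired_log2_ratios(tumor_values, normal_values)
unpaired = unpaired_log2_ratios(tumor_values, normal_values)
print(paired, unpaired)
```

A positive value corresponds to red (up in tumor) and a negative value to blue (down in tumor) in the heatmaps. The pseudocount only guards against taking the logarithm of zero.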

Despite originating from different researchers using different platforms, the patterns of up- and down-regulation across these three datasets are remarkably consistent. This demonstrates the high reliability of mRNA expression data.

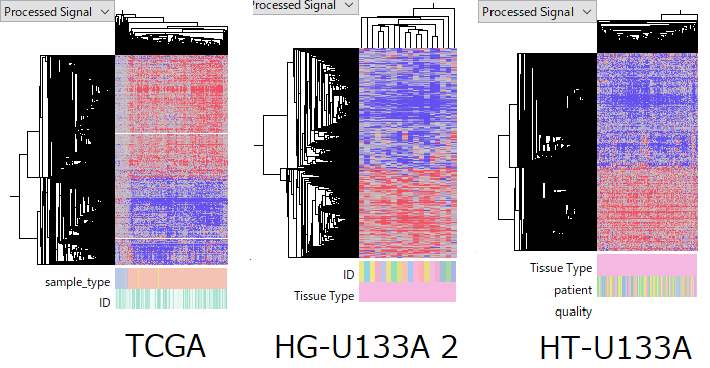

The consistency within each data set.

The TCGA-LIHC RNA-Seq data set comprises 50 normal and 370 primary tumor samples. The GSE14520 HG-U133A 2.0 array data set contains 18 normal-tumor pairs from the same patients, and the HT_HG-U133A data set contains 214 pairs. Although the quality of some samples is arguable, the overall quality is fairly good.

The expression profiles of the tumor samples are broadly similar in all data sets. This gives you reasonable confidence that the data reflects the true gene expression status.
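
One simple way to put a number on "roughly similar" is the mean pairwise Pearson correlation between tumor profiles. The sketch below is only an illustration under that assumption: the function names and the toy log2 ratios are hypothetical, and the heatmaps in this article were judged by eye, not by this metric.

```python
import math
from itertools import combinations


def pearson(x, y):
    """Pearson correlation coefficient between two equally long profiles."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    if sd_x == 0 or sd_y == 0:
        raise ValueError("correlation is undefined for a constant profile")
    return cov / (sd_x * sd_y)


def mean_pairwise_correlation(profiles):
    """Average Pearson correlation over all pairs of sample profiles."""
    pairs = list(combinations(profiles, 2))
    if not pairs:
        raise ValueError("need at least two profiles")
    return sum(pearson(a, b) for a, b in pairs) / len(pairs)


# Hypothetical log2 ratios: each row is one tumor sample, each column one gene.
consistent_set = [
    [2.0, -1.0, 0.5, -2.0],
    [1.8, -1.2, 0.4, -1.9],
    [2.2, -0.8, 0.7, -2.1],
]
noisy_set = [
    [2.0, -1.0, 0.5, -2.0],
    [-1.5, 0.9, -0.3, 1.0],
    [0.2, 1.5, -2.0, 0.1],
]

print(mean_pairwise_correlation(consistent_set))
print(mean_pairwise_correlation(noisy_set))
```

A value close to 1 means the samples tell the same story; a value near zero or below means they do not.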

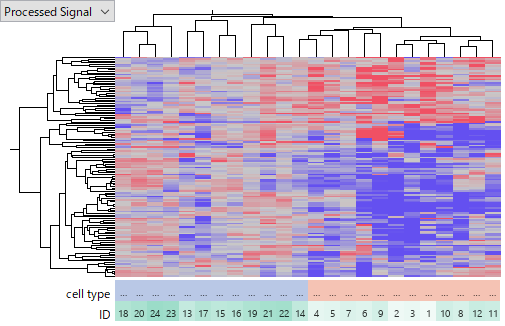

Example: microRNA Expression Datasets

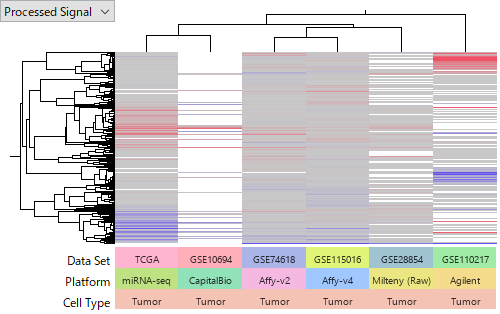

Next, let’s look at the miRNA expression datasets. Unlike the gene expression examples, the results across different datasets barely align. This highlights the difficulty in interpreting miRNA expression data.

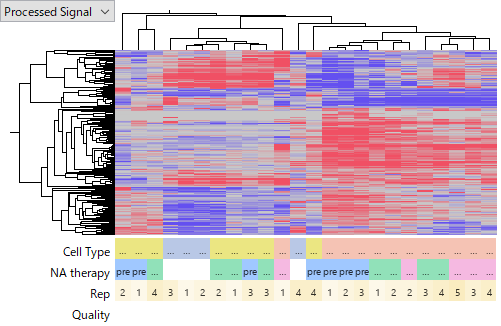

The consistency (or inconsistency) within each data set.

While TCGA-LIHC miRNA-Seq shows internal consistency among tumor samples, other datasets like GSE110217 (Agilent) reveal significant quality issues.

In GSE110217, the signal intensities in the latter half of the replicates (5-8) were markedly lower than the first half (1-4), likely due to variations in experimental skill.
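
A drop in signal like this can be caught with a very basic check before any differential analysis. The sketch below flags samples whose median log2 intensity falls well below that of the strongest sample. The function name, the 1.0 log2-unit threshold, and the replicate values are all hypothetical; they only mimic the pattern described above and are not taken from GSE110217.

```python
from statistics import median


def flag_low_signal_samples(intensities, max_drop=1.0):
    """Return names of samples whose median log2 intensity is more than
    `max_drop` below the highest sample median.

    intensities: dict mapping sample name -> list of log2 intensities.
    """
    sample_medians = {name: median(values) for name, values in intensities.items()}
    reference = max(sample_medians.values())
    return sorted(
        name for name, m in sample_medians.items() if reference - m > max_drop
    )


# Hypothetical log2 intensities for eight replicates (three probes each).
replicates = {
    "rep1": [9.8, 10.1, 10.4],
    "rep2": [9.9, 10.0, 10.2],
    "rep3": [9.7, 10.2, 10.5],
    "rep4": [9.6, 9.9, 10.3],
    "rep5": [6.9, 7.1, 7.4],
    "rep6": [6.8, 7.0, 7.3],
    "rep7": [7.0, 7.2, 7.6],
    "rep8": [6.7, 6.9, 7.2],
}

low_quality = flag_low_signal_samples(replicates)
print(low_quality)
```

Using the strongest sample as the reference is a deliberate simplification: when half of the samples are weak, a reference based on the overall median would sit between the two groups.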

Even after excluding low-quality samples, the miRNAs identified as up- or down-regulated in these datasets show almost no overlap with the TCGA results. The discrepancy is too vast to be explained simply by platform differences or gene mapping inconsistencies.
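
To make "almost no overlap" concrete, two simple measures can be computed between any pair of data sets: the Jaccard index of the up-regulated lists, and the fraction of shared miRNAs called in the same direction. The sketch below assumes each data set has already been reduced to a dictionary of up/down calls. The placeholder names (miR-A, miR-B, ...) and the calls are hypothetical, not results from the data sets discussed here.

```python
def jaccard(a, b):
    """Size of the intersection divided by size of the union of two lists."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def direction_concordance(calls_a, calls_b):
    """Fraction of miRNAs called in both data sets that share the same direction.

    Returns None when the two data sets have no miRNA in common.
    """
    shared = calls_a.keys() & calls_b.keys()
    if not shared:
        return None
    agree = sum(calls_a[m] == calls_b[m] for m in shared)
    return agree / len(shared)


# Hypothetical differential-expression calls from two data sets.
dataset_1 = {"miR-A": "up", "miR-B": "up", "miR-C": "down", "miR-D": "down"}
dataset_2 = {"miR-A": "up", "miR-C": "up", "miR-E": "down", "miR-F": "up"}

up_1 = [m for m, d in dataset_1.items() if d == "up"]
up_2 = [m for m, d in dataset_2.items() if d == "up"]

up_overlap = jaccard(up_1, up_2)                          # 0.25
concordance = direction_concordance(dataset_1, dataset_2)  # 0.5
print(up_overlap, concordance)
```

Before comparing real data sets, miRNA identifiers need to be mapped to a common naming scheme; otherwise naming differences alone will reduce the apparent overlap.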

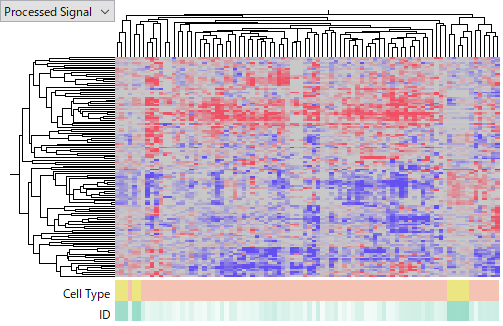

The following heatmaps show GSE74618 (Affymetrix miRNA v2 Array), GSE115016 (Affymetrix miRNA v4 Array), GSE10694 (CapitalBio Mammalian miRNA Array), and GSE28854 (Miltenyi Biotec miRXplore miRNA Microarray).

You can see that the last one is noisier and less concordant among HCC samples. I don't intend to judge which platform is better or worse, because the difference may be due to the technology, the experimenters' skill, or other factors we don't know about. My point is that miRNA expression data is far less reliable than gene expression data. Don't you think it is very hard to say which miRNAs are really up- or down-regulated in HCC?

Why is miRNA Measurement So Difficult?

This inconsistency stems from the following technical and biological factors, which remain relevant even with the advanced technologies of 2026:

- Short Sequences and High Similarity

miRNAs are very short (approx. 22 nucleotides), and family members often differ by only one or two bases. This leads to cross-hybridization in microarrays and mapping ambiguities in sequencing. Furthermore, we now recognize a significant "Library Preparation Bias", where differences in extraction kits and adapter ligation efficiency distort the true expression profile far more than in mRNA-Seq.

- Limited Number of Species (Small Population)

Compared to tens of thousands of mRNAs, there are only a few hundred to a thousand detectable miRNAs. This breaks the fundamental assumption of most normalization methods (e.g., "most genes do not change"). Because any computational normalization remains questionable, precise comparison often requires the use of "Spike-ins" (external RNA controls) to provide a stable baseline.
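
The toy example below sketches why this matters. It compares median centering, which assumes most features are unchanged, with normalization against spike-in controls. All names and values are hypothetical: five endogenous miRNAs, two spike-ins, a tumor sample in which four of the five miRNAs are truly up by 2 log2 units, and a technical offset of +1 on the whole tumor sample.

```python
from statistics import median

SPIKE_INS = ("spike-1", "spike-2")  # hypothetical external RNA controls


def median_center(sample, spike_ins=SPIKE_INS):
    """Global normalization: subtract the median of the endogenous miRNAs."""
    endogenous = {k: v for k, v in sample.items() if k not in spike_ins}
    m = median(endogenous.values())
    return {k: v - m for k, v in endogenous.items()}


def spike_in_normalize(sample, spike_ins=SPIKE_INS):
    """Subtract the mean log2 signal of the spike-ins, then drop them."""
    offset = sum(sample[s] for s in spike_ins) / len(spike_ins)
    return {k: v - offset for k, v in sample.items() if k not in spike_ins}


def log2_ratio(tumor, normal):
    """Tumor minus normal, feature by feature (values are already log2)."""
    return {k: tumor[k] - normal[k] for k in tumor}


# Hypothetical log2 signals. Truth: miR-A..D are up by 2, miR-E is unchanged.
normal = {"miR-A": 8.0, "miR-B": 8.0, "miR-C": 8.0, "miR-D": 8.0, "miR-E": 8.0,
          "spike-1": 10.0, "spike-2": 10.0}
tumor = {"miR-A": 11.0, "miR-B": 11.0, "miR-C": 11.0, "miR-D": 11.0, "miR-E": 9.0,
         "spike-1": 11.0, "spike-2": 11.0}

by_median = log2_ratio(median_center(tumor), median_center(normal))
by_spike = log2_ratio(spike_in_normalize(tumor), spike_in_normalize(normal))
print(by_median)
print(by_spike)
```

In this constructed case, median centering reports the four up-regulated miRNAs as unchanged and the unchanged one as down-regulated, while the spike-in version recovers the intended pattern. Real spike-in normalization depends on the controls being added and recovered consistently, which this sketch simply assumes.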

Conclusion: A Need for Critical Thinking

Comprehensive miRNA measurement is an evolving technology that still faces unresolved hurdles. When using miRNA datasets or relying on "up-regulated miRNA lists" from published papers, extreme caution is required.

If you are planning a miRNA experiment, remember that it demands even higher technical proficiency than gene expression studies. If you would like to discuss your data or experimental design with us, please feel free to reach out via our Contact Us page.

Download the data for your Subio Platform.

If you want to take a closer look at these data sets in Subio Platform yourself, download the SOA file, which works like a bundle of SSA files.

Open "Import Archive..." under the Platform menu and select the SOA file. Subio Platform shuts down automatically when the import completes. Please restart the software to see all the data sets.

[2026 Update] Never Ask AI, "Which miRNAs are Up-regulated in X?"

While AI-driven analysis is becoming more common in 2026, it is dangerous to blindly accept the "answers" provided by AI when the quality of the underlying data varies so drastically.

For instance, if you ask an AI, "Which miRNAs are up-regulated in HCC (Hepatocellular Carcinoma)?", it will confidently present a list extracted from past publications. However, the technical reality demonstrated in this article is that results vary significantly from paper to paper, often incorporating data of questionable reliability.

Furthermore, AI models can extract patterns even from noisy data, sometimes identifying features that may not reflect true biological signals. Ultimately, the responsibility for determining whether these results are biologically valid lies not with the AI, but with the analyst.

It is also important to note that the same caution applies to databases such as miRmine and miRNAMap, which aggregate expression levels across various tissues. While these databases can be useful, they often integrate data generated under different experimental conditions, making it difficult to distinguish biological differences from technical variation.

For omics data analysis, it is essential to first examine the data directly, rather than relying solely on AI-generated answers.