"Since I normalized with DESeq2 or TPM, my data must be clean and ready for analysis." — Are you moving forward with this assumption? In reality, non-linear biases that cannot be removed by simple correction often lead to erroneous conclusions in omics data analysis. In this article, let’s use a real-world dataset (GSE159751) to learn the critical importance of validating data distribution using visualization tools. While the debate between TPM vs. FPKM continues, the practical difference is negligible; for most studies, either will suffice.

Why "Distribution Shifts" Persist After Normalization

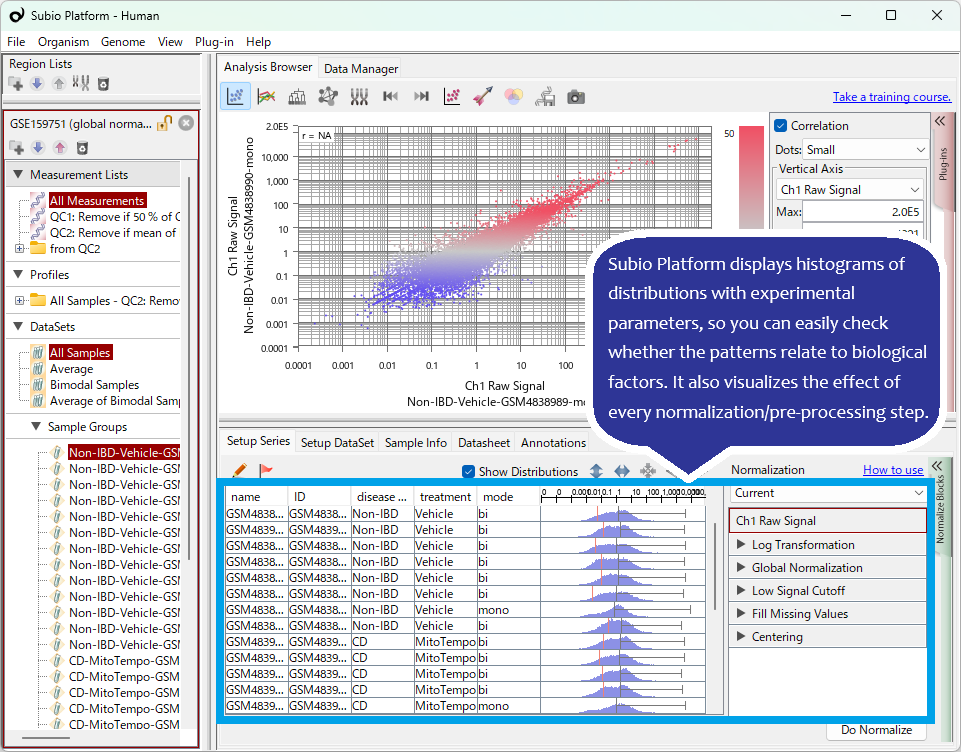

Even after "per million" normalization, TPM/FPKM distributions often remain visibly shifted between samples. This dataset also shows a non-linear bias: the FPKM distributions differ in shape, with some samples unimodal and others bimodal. A shift to a unimodal distribution may be a red flag for a sample-quality problem such as RNA degradation, so such samples deserve a closer look before analysis. If you are analyzing with Subio Platform, identifying these suspicious samples is straightforward. The key is that the analyst must understand these anomalies, decide how to handle them, and interpret the results in light of that decision. This crucial step is often overlooked by those who skip visualization.
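For readers working outside a GUI tool, a between-sample shift like the one described above can be checked by summarizing each sample's log-scale distribution and comparing the summaries. The following is a minimal sketch, not the analysis used for the figure: the function names, the `log2(x + 1)` transform, the `min_fpkm` cutoff, the toy values, and the threshold of 1.0 are all illustrative assumptions. It only detects a shift in location; judging unimodal versus bimodal shapes still calls for looking at the histograms.

```python
import math
import statistics


def log_summary(fpkm, min_fpkm=1.0):
    """Return (q1, median, q3) of log2(FPKM + 1) for genes at or above min_fpkm."""
    logged = [math.log2(v + 1.0) for v in fpkm if v >= min_fpkm]
    q1, med, q3 = statistics.quantiles(logged, n=4)
    return q1, med, q3


def flag_shifted(samples, max_median_gap=1.0):
    """Flag samples whose median log2 value is far from the cohort median.

    max_median_gap is an arbitrary illustrative threshold in log2 units.
    """
    medians = {name: log_summary(values)[1] for name, values in samples.items()}
    centre = statistics.median(medians.values())
    return sorted(n for n, m in medians.items() if abs(m - centre) > max_median_gap)


# Toy data (not from GSE159751): sample C is shifted by 3 log2 units.
base = [2.0 ** k for k in range(1, 11)]
toy = {
    "A": base,
    "B": [1.1 * v for v in base],
    "C": [8.0 * v for v in base],
}
flagged = flag_shifted(toy)
```

A flagged sample is a prompt to inspect its histogram and its quality metrics, not a verdict; how to handle it remains the analyst's decision.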

Quantile Normalization is Not a Panacea

Another vital point is to evaluate whether "advanced" algorithms can actually remove non-linear bias. Here, we applied Quantile Normalization to force the FPKM distributions of all samples into the same shape. This made the distributions look alike, but in this dataset it did nothing to remove the underlying non-linear systematic errors.
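The gap between "the distributions match" and "the bias is gone" can be illustrated with a small simulation. This is a sketch under stated assumptions, not a reproduction of the GSE159751 result: the data are simulated, `quantile_normalize` and `pearson` are helper functions written for this example, and the bias is assumed to depend on a hypothetical per-gene covariate rather than on expression level alone (a bias that is a purely monotone function of expression would be undone by rank-based normalization).

```python
import random
import statistics


def quantile_normalize(columns):
    """Quantile-normalize equal-length samples (ties broken by position)."""
    n = len(columns[0])
    sorted_cols = [sorted(col) for col in columns]
    # Reference distribution: mean of the k-th smallest value across samples.
    reference = [statistics.fmean([col[k] for col in sorted_cols]) for k in range(n)]
    normalized = []
    for col in columns:
        order = sorted(range(n), key=col.__getitem__)
        out = [0.0] * n
        for rank, gene in enumerate(order):
            out[gene] = reference[rank]
        normalized.append(out)
    return normalized


def pearson(xs, ys):
    """Plain Pearson correlation coefficient."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / (sxx * syy) ** 0.5


rng = random.Random(0)
n_genes = 2000
covariate = [rng.random() for _ in range(n_genes)]     # hypothetical per-gene property
truth = [rng.gauss(5.0, 2.0) for _ in range(n_genes)]  # shared log-scale signal
sample_a = truth
sample_b = [t + 3.0 * c ** 2 for t, c in zip(truth, covariate)]  # gene-dependent bias

norm_a, norm_b = quantile_normalize([sample_a, sample_b])
same_distribution = sorted(norm_a) == sorted(norm_b)   # marginal distributions now match
residual = [b - a for a, b in zip(norm_a, norm_b)]
bias_left = pearson(residual, covariate)                # bias still tracks the covariate
```

In this toy setup the two normalized samples share exactly the same set of values, yet the per-gene difference between them remains strongly correlated with the covariate that generated the bias. Matching histograms after normalization are therefore not evidence that a systematic error has been removed.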

What Experimental Biologists Should Do Before Relying on Algorithms

Thanks to bioinformaticians, many algorithms are available to tackle highly complex challenges. However, wise experimental biologists must remember that they are responsible for verifying whether those tools actually work on their specific data. Generating high-quality raw data through sound experimental design, and monitoring the process with accurate tools, is far more effective than blindly relying on "advanced" algorithms.

When strong systematic errors enter your data, removing their influence becomes difficult or even impossible. To avoid such pitfalls, Subio believes in the importance of pre-assessment of the chosen measurement technology and experimental planning that mitigates risk.

Don't wait until the data is out to regret it. With experience handling thousands of "failed datasets," Subio can propose experimental designs that ensure success. Let us provide a professional assessment of your plan before you start your experiments. [Contact us here].