Understanding the Difference Between TPM and FPKM Is Not Enough for RNA-Seq Analysis

TPM and FPKM are commonly seen as expression values in RNA-Seq data. Many people search for terms such as “TPM FPKM,” “FPKM vs TPM,” or “how to use TPM for expression analysis.”

TPM and FPKM are both expression measures calculated by adjusting read counts for gene length and sequencing depth. Broadly speaking, FPKM scales each count by library size (per million fragments) and by gene length (per kilobase), whereas TPM first adjusts for gene length and then rescales the values so that they sum to the same total (one million) in every sample. For this reason, TPM can be easier to interpret when comparing the relative expression levels of different genes within the same sample.
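As a rough illustration, the two calculations can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions: the function names, the toy counts, and the gene lengths are all made up for the example, and the counts are assumed to be fragment counts for a single sample.

```python
def fpkm(counts, lengths_bp):
    """FPKM for one sample: count per kilobase of gene, per million counted fragments."""
    total_millions = sum(counts) / 1e6
    return [c / (length / 1e3) / total_millions
            for c, length in zip(counts, lengths_bp)]


def tpm(counts, lengths_bp):
    """TPM for one sample: length-adjust first, then rescale so the values sum to 1e6."""
    rates = [c / (length / 1e3) for c, length in zip(counts, lengths_bp)]
    scale = sum(rates) / 1e6
    return [r / scale for r in rates]


# Hypothetical toy sample: three genes with made-up counts and lengths (bp).
counts = [100, 300, 600]
lengths_bp = [1000, 3000, 2000]

fpkm_values = fpkm(counts, lengths_bp)
tpm_values = tpm(counts, lengths_bp)

print("FPKM:", fpkm_values, "sum =", sum(fpkm_values))
print("TPM: ", tpm_values, "sum =", sum(tpm_values))
```

Within one sample, TPM is simply FPKM rescaled to a fixed total, so the ranking of genes is identical. What differs is that the TPM total is the same in every sample, while the FPKM total is not.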

However, what matters in RNA-Seq analysis is not simply whether TPM or FPKM should be used. When differential expression analysis, comparisons between samples, data quality checks, and interpretation of results are considered together, understanding the difference between TPM and FPKM alone is not sufficient.

For Differential Expression Analysis, Use Gene Counts Instead of TPM or FPKM

In RNA-Seq differential expression analysis, Gene Counts should generally be used as the starting point, rather than TPM or FPKM. Common differential expression analysis methods such as DESeq2, edgeR, and limma-voom are designed to evaluate differences between groups based on Gene Counts, while taking library size and data distribution into account.

In contrast, TPM and FPKM are values that have already been adjusted for gene length and sequencing depth. Using these values as the main input for differential expression analysis does not fit well with the assumptions of standard RNA-Seq analysis methods. If the goal is differential expression analysis, the starting point should not be choosing between TPM and FPKM, but preparing Gene Counts.

This point is explained in more detail in the following article.

TPM, FPKM, and RPKM Should Not Be Used for Differential Expression Analysis | RNA-Seq DEG Analysis Should Start from Gene Counts

Even “Normalized” TPM or FPKM Values Cannot Always Be Trusted As They Are

What if you are not performing differential expression analysis, but instead using TPM or FPKM values distributed in a public database or supplementary data from a paper to examine overall sample trends?

Here, it is important to be careful with the word “normalized.” TPM and FPKM are often treated as normalized expression values. They do adjust read counts based on gene length and sequencing depth. However, this does not mean that the resulting data are automatically suitable for reliable comparison between samples.

This adjustment rescales each sample as a whole. It does not actively correct distortions in the overall data distribution between samples. As a result, nonlinear distortions often observed in RNA-Seq data, such as differences between samples that vary across low- and high-expression ranges, can remain even after conversion to TPM or FPKM.

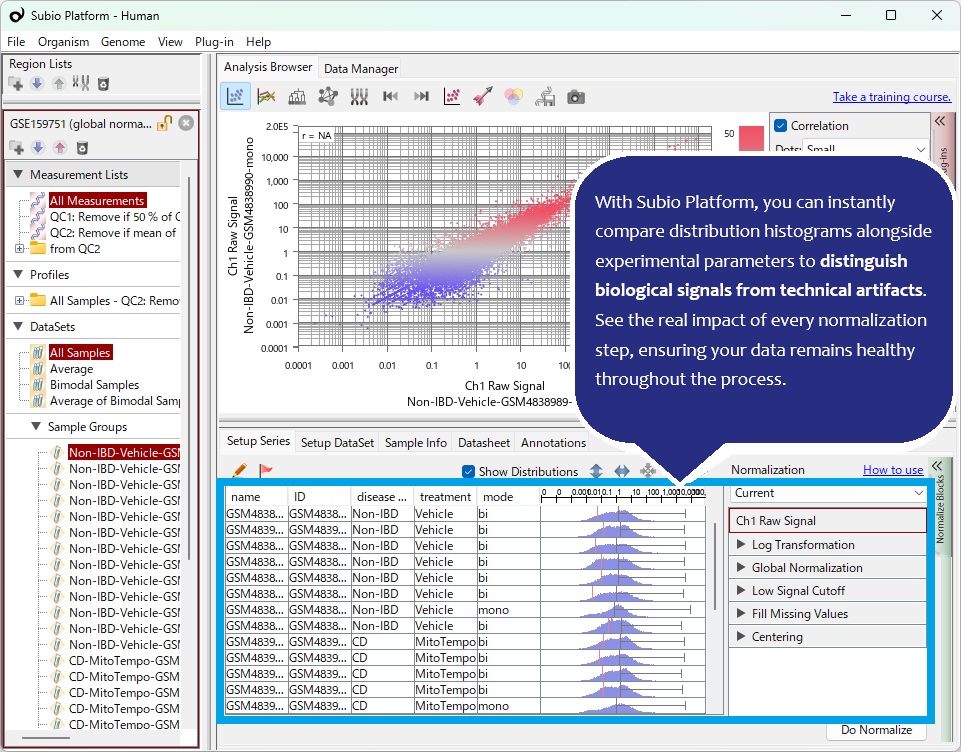

In other words, TPM and FPKM may be values that are easier to view than raw Gene Counts, but they are not necessarily values that can be trusted as they are for comparison between samples. In this article, we use the actual dataset GSE159751 to examine why checking the data before analysis remains important, even when the data have already been converted to TPM or FPKM.

Nonlinear Distortions Can Remain Even After Converting to TPM or FPKM

TPM and FPKM correct expression values by considering factors such as read count and gene length. However, adjusting for differences in read count or data size is a linear normalization. It can partially correct for differences in data amount, but it cannot remove all complex distortions contained in RNA-Seq data.
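To make "linear" concrete, here is a minimal sketch using made-up expression values. Multiplying a whole sample by a constant (a hypothetical factor of 4, standing in for a difference in data amount) moves every value by the same amount on the log2 scale and leaves the spread and shape of the distribution untouched.

```python
import math
import statistics

# Hypothetical expression values for one sample (made-up numbers).
sample = [0.5, 2.0, 8.0, 32.0, 128.0]

# A per-sample scaling factor, standing in for a difference in data amount.
scale_factor = 4.0
scaled = [v * scale_factor for v in sample]

orig_log = [math.log2(v) for v in sample]
scaled_log = [math.log2(v) for v in scaled]

# Every gene moves by the same amount on the log scale ...
shifts = [s - o for s, o in zip(scaled_log, orig_log)]
# ... so the spread (and the shape) of the distribution does not change.
spread_before = statistics.pstdev(orig_log)
spread_after = statistics.pstdev(scaled_log)

print("log2 shifts:", shifts)
print("spread before/after:", spread_before, spread_after)
```

A correction of this kind can line up the positions of two distributions, but it has no way to change one sample's shape to match another's.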

RNA-Seq data are affected by multiple intertwined factors, including RNA quality, sample composition, variation among low-expression genes, and read bias toward specific gene groups. Therefore, differences among samples may appear not only as simple scaling differences, but also as differences in the shape of the entire distribution. Such nonlinear distortions are not resolved simply by converting the data to TPM or FPKM.

In this dataset, the shapes of the FPKM distributions differ substantially among samples. Some samples show distributions close to unimodal while others do not, which indicates nonlinear distortion that simple scaling correction cannot handle.

Such distributional differences are easily overlooked if you only see that TPM or FPKM values have been output. However, if the data are properly visualized and checked, it is not difficult to notice samples with questionable quality or samples whose distributions differ greatly from the others.
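Plots are the most direct way to do this check, but a numeric summary can serve as a first pass. The sketch below is only one possible approach, not the method used in this article: it compares the deciles of log2(FPKM + 1) for each sample against the median decile profile across samples. The sample names, the values, and the helper functions are all hypothetical.

```python
import math
import statistics


def quantile_profile(values, n=10):
    """Deciles of log2(value + 1) for one sample."""
    logged = [math.log2(v + 1.0) for v in values]
    return statistics.quantiles(logged, n=n, method="inclusive")


def profile_distances(samples):
    """Mean absolute distance of each sample's deciles from the median decile profile."""
    profiles = {name: quantile_profile(vals) for name, vals in samples.items()}
    reference = [statistics.median(col) for col in zip(*profiles.values())]
    return {
        name: statistics.fmean([abs(p - r) for p, r in zip(prof, reference)])
        for name, prof in profiles.items()
    }


# Hypothetical FPKM values (made up); sample_C has a visibly different shape.
samples = {
    "sample_A": [0, 1, 3, 7, 15, 31, 63, 127, 255, 511, 1023],
    "sample_B": [0, 1, 3, 8, 15, 30, 63, 130, 255, 500, 1023],
    "sample_C": [0, 0, 0, 0, 1, 1, 3, 7, 1023, 2047, 4095],
}

distances = profile_distances(samples)
most_deviant = max(distances, key=distances.get)

for name, dist in distances.items():
    print(name, round(dist, 3))
print("largest deviation:", most_deviant)
```

A summary like this only tells you where to look. Whether a deviating sample should be kept, excluded, or handled separately is still a judgment the analyst has to make after inspecting the actual distributions.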

What matters is that the analyst understands that questionable samples are included, decides how to handle them, and interprets the analysis results based on that decision. In analyses that neglect visualization, this kind of evidence can easily be missed.

Adding More Normalization or Correction Does Not Always Make the Data Clean

Then, should we simply apply another normalization or correction method to the distortions remaining in TPM or FPKM? In reality, it is not that simple. Even methods such as TMM, VST, ComBat, and Quantile Normalization do not always make the data clean in the expected way.

Normalization and correction are not magic procedures that mechanically restore data to a “correct” state. Depending on the type and magnitude of distortion in the original data, sample quality, batch structure, and how these factors overlap with group differences, interpretation problems may remain even after correction. Also, even if the corrected data look cleaner, there is no guarantee that the change reflects the original biological state.

For more details on the limitations of applying TMM, VST, ComBat, Quantile Normalization, and other methods to RNA-Seq data, see the follow-up article Limitations of Batch Effect Correction and Normalization in RNA-Seq.

Quantile Normalization Is Not a Cure-All

In this video, we also try Quantile Normalization to forcibly align the FPKM distributions. As a result, the distribution shapes among samples appear more similar at first glance. However, clustering shows that aligning the distributions alone does not solve the problem. In other words, even if the distribution looks cleaner, that does not mean the data have become suitable for biological comparison.
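For reference, the core of quantile normalization can be sketched as follows. This is a simplified illustration, not the implementation used here: the function name and the toy values are hypothetical, and ties are broken by position rather than averaged.

```python
def quantile_normalize(samples):
    """Quantile-normalize samples given as {name: values}, with genes in the same order.

    Simplified sketch: ties are broken by position instead of being averaged.
    """
    names = list(samples)
    n_genes = len(samples[names[0]])
    sorted_cols = [sorted(samples[name]) for name in names]
    # Reference distribution: mean of the k-th smallest value across samples.
    reference = [sum(col[k] for col in sorted_cols) / len(names)
                 for k in range(n_genes)]

    normalized = {}
    for name in names:
        values = samples[name]
        # Gene indices ordered from lowest to highest value in this sample.
        order = sorted(range(n_genes), key=lambda i: values[i])
        out = [0.0] * n_genes
        for rank, gene_index in enumerate(order):
            out[gene_index] = reference[rank]
        normalized[name] = out
    return normalized


# Hypothetical expression values for four genes in three samples (made up).
samples = {
    "s1": [5.0, 2.0, 3.0, 4.0],
    "s2": [4.0, 1.0, 4.5, 2.0],
    "s3": [3.0, 4.0, 6.0, 8.0],
}

normalized = quantile_normalize(samples)
for name, values in normalized.items():
    print(name, values)
```

By construction, every sample ends up with exactly the same set of values, so the distributions are guaranteed to look aligned. The rank of each gene within its sample is unchanged, however, so any distortion in which genes rank high or low in a given sample is carried through untouched.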

Assume Distortion Exists, and Find an Explainable Analysis Strategy

In RNA-Seq data analysis, nonlinear distortions and sample-to-sample differences are frequently present. Therefore, it is necessary to proceed with the mindset that such issues exist, and to decide how to handle them during the analysis.

What matters is neither to ignore the possibility of distortion nor to expect algorithms to remove it completely. You need to understand precisely what changed after applying a given method, and then consider which samples to use, which normalization or correction method to adopt, and how far the results can be interpreted as biological differences. RNA-Seq data analysis may be described as the process of finding an analysis strategy that can be explained to others, while working through this kind of ambiguity.

What Experimental Biologists Should Do Before Relying on Algorithms

Thanks to the efforts of bioinformaticians, many algorithms are now available for addressing highly complex problems. However, wise experimental biologists should not forget that they must verify for themselves whether an algorithm truly works for their own data.

At present, it is safer to rely on careful experimental design and planning that yield high-quality raw data, together with visualization tools that accurately show the state of the data, than to rely blindly on “advanced” algorithms.

Once strong systematic errors are introduced into the data, removing their effects and performing a reliable analysis may become difficult or even impossible. To avoid such situations, Subio considers two things important: assessing the measurement technology in advance, and planning experiments so as to reduce risk.

Rather than regretting it after the data have been generated, let Subio assess your plan from a professional perspective. Based on our experience trying to rescue thousands of “failed datasets,” we can propose experimental plans designed to avoid failure. [Contact us]

What you need to learn is not command operation or how to use tools, but “data analysis.”

Related Topics

- Why TPM, FPKM, and RPKM Should Not Be Used for RNA-Seq Differential Expression Analysis | DEG Analysis Should Start from Gene Counts

- RNA-Seq PCA: When Samples Appear Separated — Check Data Biases Before Assuming Batch Effects

- Limitations of Batch Effect Correction and Normalization in RNA-Seq

- Getting Started with RNA-Seq Analysis (For Beginners): Understanding the Workflow and Key Concepts