"These samples were prepared under the same conditions, so why is the variation between replicates larger than the difference between groups?"

If you've asked yourself this, you are facing the inevitable challenge of batch effects (systematic errors). Many researchers assume that "normalizing with CPM makes samples comparable," but in reality CPM normalization itself can sometimes exacerbate data distortion. In this article, we use real-world data to explain how to monitor what is actually happening before relying on "advanced" correction algorithms.

Focus on "Distribution," Not Just "Numbers"

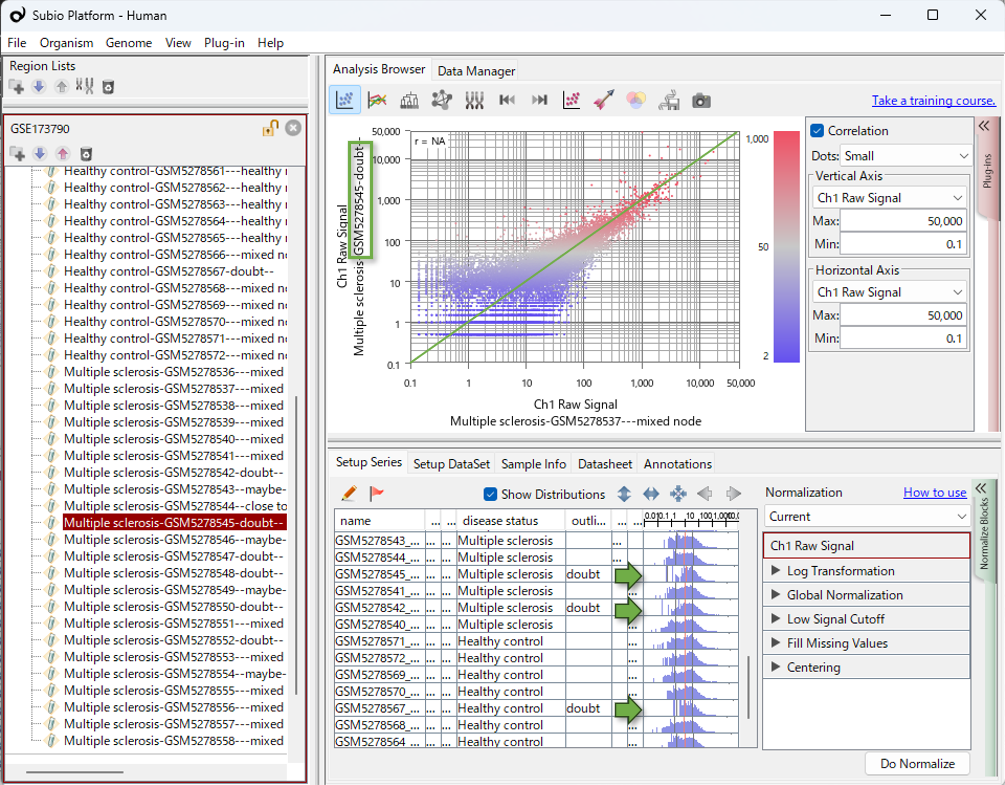

In Subio Platform, experimental parameters and histograms of value distributions are displayed in the same table. This makes it immediately obvious whether a pattern in the data relates to a biological factor or a technical batch. In RNA-Seq, samples with extremely low read counts can have their CPM values artificially shifted to the right (as seen in the histograms above), because a single read in a small library corresponds to a large CPM value. This can create the illusion of variation where none truly exists. Proceeding with analysis without visualizing these shifts carries a clear risk of extracting a false list of differentially expressed genes (DEGs).
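The depth effect can be reproduced with a small simulation. This is a minimal Python/NumPy sketch, not how Subio Platform computes its histograms. It assumes that both libraries are Poisson samples of the same hypothetical, log-normally distributed gene abundances and differ only in sequencing depth. The helper `detected_log2_cpm` is a name made up for this example.

```python
import numpy as np

def detected_log2_cpm(counts: np.ndarray) -> np.ndarray:
    """log2 CPM of the genes that received at least one read in one sample."""
    cpm = counts / counts.sum() * 1e6
    return np.log2(cpm[counts > 0])

rng = np.random.default_rng(0)
n_genes = 20_000

# Assumed "true" relative abundances, identical for both libraries (same biology).
proportions = rng.lognormal(mean=0.0, sigma=2.0, size=n_genes)
proportions /= proportions.sum()

# Poisson sampling of reads at two sequencing depths (hypothetical depths).
deep = rng.poisson(proportions * 20_000_000)
shallow = rng.poisson(proportions * 200_000)

for name, counts in [("deep", deep), ("shallow", shallow)]:
    values = detected_log2_cpm(counts)
    one_read = 1e6 / counts.sum()  # CPM value of a single read in this library
    print(f"{name}: detected genes={values.size}, one read={one_read:.2f} CPM, "
          f"median log2 CPM={np.median(values):.2f}")
```

In the shallow library, the smallest possible nonzero value is one read, which is 1e6 divided by the library size (about 5 CPM here). That floor cuts off the left tail of the histogram, so the median moves right even though the underlying biology is identical.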

Transcriptomics analysis relies on a fundamental assumption: most genes remain unchanged, and only a small fraction are differentially expressed. Without this assumption, normalization becomes impossible. When histogram shapes differ significantly between samples, this core assumption has been violated. In such cases, my personal stance is clear: it indicates a fatal error in the measurement process, and the only viable option is to exclude those samples from the analysis.
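One way to turn "histogram shapes differ significantly" into a number is to compare each sample's quantiles after removing its median. This sketch assumes a genes × samples matrix of log-scale values. The function name, the 5 to 95% quantile grid, and the 0.5 cut-off are hypothetical choices for illustration. They are not a Subio Platform feature or an established standard. Centering on the median ignores a pure shift, which global scaling normalization can fix, but it keeps differences in spread or skew.

```python
import numpy as np

PROBS = np.linspace(0.05, 0.95, 19)  # quantile grid used to summarize each histogram

def shape_deviation(log_expr: np.ndarray, probs: np.ndarray = PROBS) -> np.ndarray:
    """Per-sample maximum distance between its distribution shape and the consensus shape.

    log_expr: genes x samples matrix of log-scale expression values.
    Quantiles are centered on each sample's own median, so a pure location
    shift does not count as a shape difference.
    """
    q = np.quantile(log_expr, probs, axis=0)          # (n_probs, n_samples)
    q_centered = q - np.median(log_expr, axis=0)      # remove per-sample location
    consensus = np.median(q_centered, axis=1, keepdims=True)
    return np.max(np.abs(q_centered - consensus), axis=0)

# Synthetic example: six samples, one shifted, one with a wider distribution.
rng = np.random.default_rng(1)
data = rng.normal(5.0, 2.0, size=(5000, 6))
data[:, 1] += 3.0                              # location shift only
data[:, 5] = 5.0 + (data[:, 5] - 5.0) * 2.0    # different shape: twice the spread

THRESHOLD = 0.5  # hypothetical cut-off in log2 units; would need tuning on real data
dev = shape_deviation(data)
print(np.round(dev, 2), "flagged:", np.flatnonzero(dev > THRESHOLD))
```

Only sample 5 stands out. Sample 1 differs merely in location, which normalization is meant to handle.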

Batch Effects Can Occur in Multiple Layers

Even after removing obvious outliers and satisfying the basic assumptions, the labyrinth of data analysis often continues. In this dataset, where normalization should have worked perfectly, a strange cluster suddenly emerged, forcing us to consider the presence of further batch effects.

To be specific, the Control group split into two distinct clusters: one showing a profile clearly distinct from the disease group, and the other closely mirroring it. This discovery presents two contradictory possibilities that no algorithm can resolve on its own:

- Biological Diversity (Clinical Factors): The control group may contain a mix of individuals, some representing a true "healthy" baseline and others already in a pre-disease state. If the data accurately captures this reality, these samples must never be excluded from the analysis.

- Batch Effects (Experimental Factors): While the cluster furthest from the disease group may look like a "normal" control, that very distinctness could be an "illusion" created by a batch effect. In this scenario, those samples should be excluded to reach the truth.
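To describe the split itself, one simple option is to measure how far each control sample lies from the disease-group mean profile and look for a natural break. This sketch uses synthetic data and hypothetical helper names. Euclidean distance and a largest-gap split are just one of many possible choices. The sketch also has a clear limit: it shows that some controls resemble the disease group, but it cannot say whether that resemblance is biological or a batch effect.

```python
import numpy as np

def distance_to_centroid(samples: np.ndarray, reference: np.ndarray) -> np.ndarray:
    """Euclidean distance of each column of `samples` to the mean profile of `reference`.
    Both are genes x samples matrices of normalized log2 expression."""
    centroid = reference.mean(axis=1, keepdims=True)
    return np.linalg.norm(samples - centroid, axis=0)

def split_at_largest_gap(values: np.ndarray) -> tuple[np.ndarray, np.ndarray]:
    """Split sample indices into (near, far) at the largest gap between sorted values."""
    order = np.argsort(values)
    cut = int(np.argmax(np.diff(values[order]))) + 1
    return np.sort(order[:cut]), np.sort(order[cut:])

# Synthetic data: controls 0-3 sit at a baseline, controls 4-7 resemble the disease group.
rng = np.random.default_rng(2)
n_genes = 2000
baseline = rng.normal(5.0, 1.0, size=(n_genes, 1))
signature = np.zeros((n_genes, 1))
signature[:200] = 2.0                          # genes that differ in the disease group
disease = baseline + signature + rng.normal(0, 0.3, size=(n_genes, 6))
control = np.hstack([
    baseline + rng.normal(0, 0.3, size=(n_genes, 4)),
    baseline + signature + rng.normal(0, 0.3, size=(n_genes, 4)),
])

dist = distance_to_centroid(control, disease)
near, far = split_at_largest_gap(dist)
print("near disease:", near, "far from disease:", far)
```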

The Futility of "Cleaning" Data

In a situation like this, debating which statistical method will "solve" the problem, or which algorithm makes the data look better, is meaningless. No matter how many algorithms you try, the two contradictory possibilities presented by the data remain. What is required here is to look beyond the computer screen: investigate the biological or pathological background of the Control group, and check the experimental logs to see exactly how the study was conducted.
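Part of checking the experimental logs can be made systematic. Once sample metadata such as the library preparation run has been transcribed, the cluster labels can be cross-tabulated against it. In this sketch, the `prep_run` column and its values are hypothetical.

Keep two caveats in mind when reading the result. A split that coincides perfectly with a run is consistent with a batch effect but does not prove one, because a biological subgroup that happened to be processed together would look exactly the same. And if every sample sits in its own batch, the check is trivially true.

```python
from collections import Counter, defaultdict

def crosstab(clusters, batches):
    """Count samples for each (cluster, batch) pair, as {cluster: {batch: n}}."""
    table = defaultdict(Counter)
    for cluster, batch in zip(clusters, batches):
        table[cluster][batch] += 1
    return {cluster: dict(counts) for cluster, counts in table.items()}

def batch_explains_split(clusters, batches) -> bool:
    """True if every batch falls entirely within one cluster, i.e. the cluster
    split could be reproduced by grouping samples by batch alone.
    Trivially True when each sample is its own batch."""
    clusters_per_batch = defaultdict(set)
    for cluster, batch in zip(clusters, batches):
        clusters_per_batch[batch].add(cluster)
    return all(len(found) == 1 for found in clusters_per_batch.values())

# Hypothetical control samples: cluster labels from the analysis, and a
# library preparation run transcribed from the lab notebook.
clusters = ["far", "far", "far", "near", "near", "near"]
prep_run = ["run1", "run1", "run1", "run2", "run2", "run2"]

print(crosstab(clusters, prep_run))
print("split coincides with prep run:", batch_explains_split(clusters, prep_run))
```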

Ultimately, the analyst must decide which stance to take. Even when clear evidence is nowhere to be found, there are moments when you must simply make a choice and commit to it. Whichever path you choose, that decision then becomes the logical foundation upon which all resulting data must be interpreted.

Saying this may draw criticism from those who insist that "objectivity is everything" in analysis, but "subjectivity", meaning the analyst's deliberate judgment, is absolutely essential. When we face the overwhelming complexity of omics data, we never have enough time, money, knowledge, or experience to reach a purely objective answer.

What "Subtle Variations" Suggest: Complementary Use of Bulk and Single-Cell RNA-Seq

Suppose you determine that the Control cluster furthest from the disease group was merely a batch effect and exclude it. As a result, the expression difference between the remaining Control group and the disease group becomes very small.

In bulk RNA-Seq, which observes diverse cell populations as a single entity, it is only natural that significant changes occurring in only a small sub-population will appear as a "slight difference." It might seem logical to conclude, "We should re-do the experiment using single-cell RNA-Seq (scRNA-Seq) or cell sorting to isolate specific cells."
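Simple arithmetic makes the dilution concrete. This sketch assumes that every cell expresses the gene at the same baseline level and contributes the same amount of RNA, which real tissues rarely satisfy. The function name is hypothetical. Under these assumptions, the bulk fold change is a weighted average, (1 − f)·1 + f·FC, for a fraction f of cells whose expression changes by a factor FC.

```python
import math

def bulk_fold_change(fraction: float, subpop_fold_change: float) -> float:
    """Fold change seen in bulk RNA-Seq when only `fraction` of cells change.

    Simplifying assumptions: every cell expresses the gene at the same baseline
    level and contributes the same amount of RNA to the library.
    """
    unchanged = (1.0 - fraction) * 1.0
    changed = fraction * subpop_fold_change
    return unchanged + changed

for frac in (0.20, 0.05, 0.01):
    fc = bulk_fold_change(frac, 10.0)
    print(f"{frac:.0%} of cells up 10-fold -> bulk fold change {fc:.2f} "
          f"(log2 {math.log2(fc):.2f})")
```

For example, a 10-fold increase in 5% of cells appears in bulk as a 1.45-fold change (log2 about 0.54). If only 1% of cells change, the bulk fold change is just 1.09.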

However, there is a crucial point that must not be overlooked: single-cell methods have significantly lower precision and sensitivity than bulk analysis. Rather than struggling to chase signals in a noisy, cutting-edge technology, it may be more effective to focus on the differential expression that reliably exists in high-precision bulk RNA-Seq data, even if it is small, and pick it up carefully. This need not be an either-or choice; combining both approaches is also worth considering.
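Part of the precision gap comes from simple counting statistics. Suppose the reads or UMIs for a gene follow a Poisson distribution with expected count μ. Then the coefficient of variation is 1/√μ, and the chance of seeing at least one count is 1 − e^(−μ).

The expected counts used below (0.2 per cell, 200 in a bulk library) are illustrative assumptions, not measurements. The sketch also ignores capture losses and cell-to-cell biological variability, both of which add further noise in single-cell data.

```python
import math

def poisson_cv(mean_count: float) -> float:
    """Coefficient of variation of a Poisson count: sd / mean = 1 / sqrt(mean)."""
    return 1.0 / math.sqrt(mean_count)

def detection_probability(mean_count: float) -> float:
    """Probability that a Poisson count is at least 1: 1 - exp(-mean)."""
    return 1.0 - math.exp(-mean_count)

# Illustrative (assumed) expected counts for the same gene.
scenarios = {"single cell": 0.2, "bulk library": 200.0}
for name, mu in scenarios.items():
    print(f"{name:>12}: mean={mu:g}, CV={poisson_cv(mu):.2f}, "
          f"P(detected)={detection_probability(mu):.3f}")
```

With these numbers, a single cell gives a CV of about 2.24 and detects the gene in roughly 18% of cells. The bulk library gives a CV of about 0.07. Summing many cells (pseudobulk) lowers the single-cell noise, which is one reason combining the two approaches can pay off.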

The True Role of an Analyst

The process described above is a reflection of my own thoughts and reasoning while working with this data; I am not suggesting that this is the "correct" answer.

My point is that the true role of an analyst is to engage in this kind of deep thinking. When multiple possibilities cannot be narrowed down, the analyst's job is to present the results of each possible scenario together with the reasoning behind it, so that team leaders, experimentalists, and pathologists can discuss them together. Mastering sophisticated statistical tools is not the essence of omics data analysis.

Mastering Data Analysis is a Journey

To truly "learn data analysis" means to gain experience by actually facing and analyzing various, messy datasets. However, this kind of expertise is not something that can be acquired overnight; it typically takes a massive amount of time and dedicated practice.

How Subio Supports Your Growth

At Subio, we provide practical, hands-on learning through online training sessions where we work together using your actual data. While it depends on the individual, building a solid foundation typically requires about 3 to 6 personalized sessions over the course of a year.

For those who do not have that kind of time, we recommend our Data Analysis Service. But make no mistake: this is not just another outsourcing service. Subio’s service is designed to help you understand every data characteristic and analysis step, empowering you to make the final judgment and reach your own conclusions. If you are interested in a partnership that values insight over automation, please feel free to contact us.

Master Analysis, Not the Tool.

Related Topics

- Don’t trust TPM/FPKM/RPKM too much. They don’t promise to cancel the systematic error of RNA-Seq data.

- Beyond Plugins: Why Subio Platform’s Core Features are Your Ultimate Analysis Partner

- The systematic error is inevitable for the omics experiment.

- What is the most effective way to learn the omics data analysis?